
« on: April 26, 2020, 07:50:09 am »

I had a feeling that is what you meant. Here is the command I am using to launch the VM.

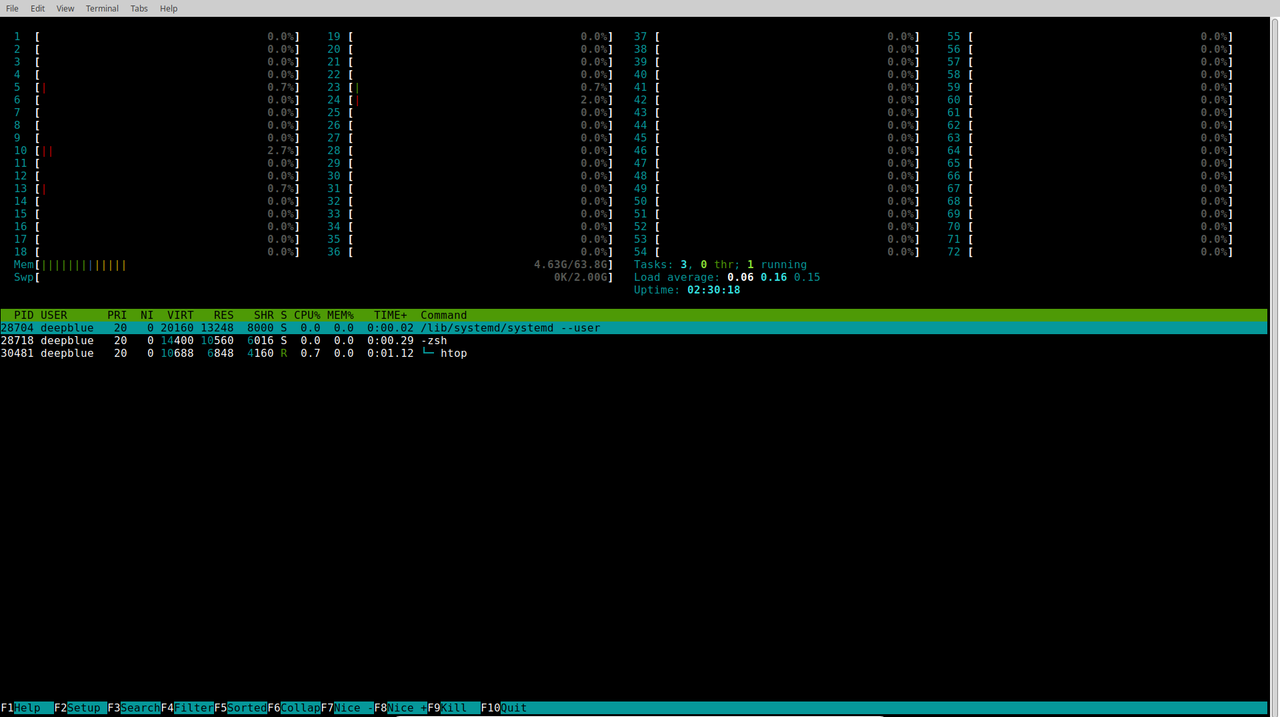

```
/usr/bin/qemu-system-x86_64 \
  -name guest=The_Dude,debug-threads=on \
  -S \
  -object secret,id=masterKey0,format=raw,file=/var/lib/libvirt/qemu/domain-9-The_Dude/master-key.aes \
  -machine pc-i440fx-bionic,accel=tcg,usb=off,dump-guest-core=off \
  -cpu kvm32 \
  -m 256 \
  -realtime mlock=off \
  -smp 4,sockets=4,cores=1,threads=1 \
  -uuid cd817648-4846-42d4-936c-10a13375295a \
  -no-user-config \
  -nodefaults \
  -chardev socket,id=charmonitor,path=/var/lib/libvirt/qemu/domain-9-The_Dude/monitor.sock,server,nowait \
  -mon chardev=charmonitor,id=monitor,mode=control \
  -rtc base=utc,driftfix=slew \
  -no-kvm-pit-reinjection \
  -no-hpet \
  -no-shutdown \
  -global PIIX4_PM.disable_s3=1 \
  -global PIIX4_PM.disable_s4=1 \
  -boot menu=on,strict=on \
  -device ich9-usb-ehci1,id=usb,bus=pci.0,addr=0x4.0x7 \
  -device ich9-usb-uhci1,masterbus=usb.0,firstport=0,bus=pci.0,multifunction=on,addr=0x4 \
  -device ich9-usb-uhci2,masterbus=usb.0,firstport=2,bus=pci.0,addr=0x4.0x1 \
  -device ich9-usb-uhci3,masterbus=usb.0,firstport=4,bus=pci.0,addr=0x4.0x2 \
  -drive 'file=/ssd_01/vms/The Dude.vdi,format=vdi,if=none,id=drive-ide0-1-0' \
  -device ide-hd,bus=ide.1,unit=0,drive=drive-ide0-1-0,id=ide0-1-0,bootindex=1 \
  -drive file=/usb_01/samba/Software/mikrotik-6.45.3.iso,format=raw,if=none,id=drive-ide0-1-1,readonly=on \
  -device ide-cd,bus=ide.1,unit=1,drive=drive-ide0-1-1,id=ide0-1-1 \
  -netdev tap,fd=25,id=hostnet0 \
  -device e1000,netdev=hostnet0,id=net0,mac=52:54:00:d3:2c:ea,bus=pci.0,addr=0x3 \
  -netdev tap,fd=28,id=hostnet1 \
  -device e1000,netdev=hostnet1,id=net1,mac=52:54:00:87:a4:b6,bus=pci.0,addr=0x6 \
  -chardev pty,id=charserial0 \
  -device isa-serial,chardev=charserial0,id=serial0 \
  -vnc 127.0.0.1:0,password \
  -device cirrus-vga,id=video0,bus=pci.0,addr=0x2 \
  -device virtio-balloon-pci,id=balloon0,bus=pci.0,addr=0x5 \
  -msg timestamp=on
```
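The options that decide how the guest actually runs are easy to miss in a command line this long. The sketch below is a rough helper, not part of libvirt or QEMU. It assumes Python 3.9+, uses a shortened copy of the command (only the first few options), and the function names `option_values` and `machine_props` are hypothetical. It pulls out the `-machine` properties so the accelerator can be read directly. For this command that is `accel=tcg`, which selects QEMU's software emulator (TCG) rather than `accel=kvm`.

```python
import shlex

# Hypothetical, shortened copy of the logged command: only the options inspected here.
CMD = (
    "/usr/bin/qemu-system-x86_64 -name guest=The_Dude,debug-threads=on "
    "-machine pc-i440fx-bionic,accel=tcg,usb=off,dump-guest-core=off "
    "-cpu kvm32 -m 256 -smp 4,sockets=4,cores=1,threads=1"
)


def option_values(cmdline: str, flag: str) -> list[str]:
    """Return every argument that directly follows `flag` in the command line."""
    args = shlex.split(cmdline)  # shell-style split, so quoted paths stay whole
    return [args[i + 1] for i, arg in enumerate(args[:-1]) if arg == flag]


def machine_props(cmdline: str) -> dict[str, str]:
    """Split the first -machine value into its type and key=value properties.

    Assumes the machine type is given positionally (no 'type=' prefix),
    as in the logged command.
    """
    values = option_values(cmdline, "-machine")
    if not values:
        return {}
    machine_type, *rest = values[0].split(",")
    props = {"type": machine_type}
    for item in rest:
        key, _, val = item.partition("=")
        props[key] = val
    return props


props = machine_props(CMD)
print(props["type"], props.get("accel"))  # pc-i440fx-bionic tcg
print(option_values(CMD, "-cpu"))         # ['kvm32']
```

Pasting the full command into `CMD` works the same way, as long as the path containing a space stays quoted.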

Edit: I am also using the pre-built QEMU and KVM packages installed with apt-get.